Multi-Tenant SaaS Application on Amazon EKS

Multi-tenant SaaS systems offer significant advantages such as cost efficiency, scalability, and operational agility. In this post, I’ll walk through a practical architecture for building multi-tenant SaaS applications on Amazon EKS, focusing on tenant onboarding, isolation, and the close relationship between business requirements and technical design. While some AWS services and best practices have evolved since this was first written, the core SaaS principles and architectural trade-offs discussed here are still highly relevant today.

Onboarding a New Tenant

A smooth onboarding experience is critical for any SaaS product. From the customer’s perspective, the goal is simple: provide minimal input during registration, choose a pricing plan, and start using the product immediately.

Behind the scenes, however, onboarding a new tenant is a non-trivial process. The platform must provision tenant-specific resources, configure identity and access, apply the selected pricing model, and prepare the application environment, all without exposing this complexity to the end user.

When building multi-tenant SaaS systems, architectural decisions around tenant isolation and automation directly affect how scalable and flexible this onboarding process can be.

What Happens During Tenant Onboarding

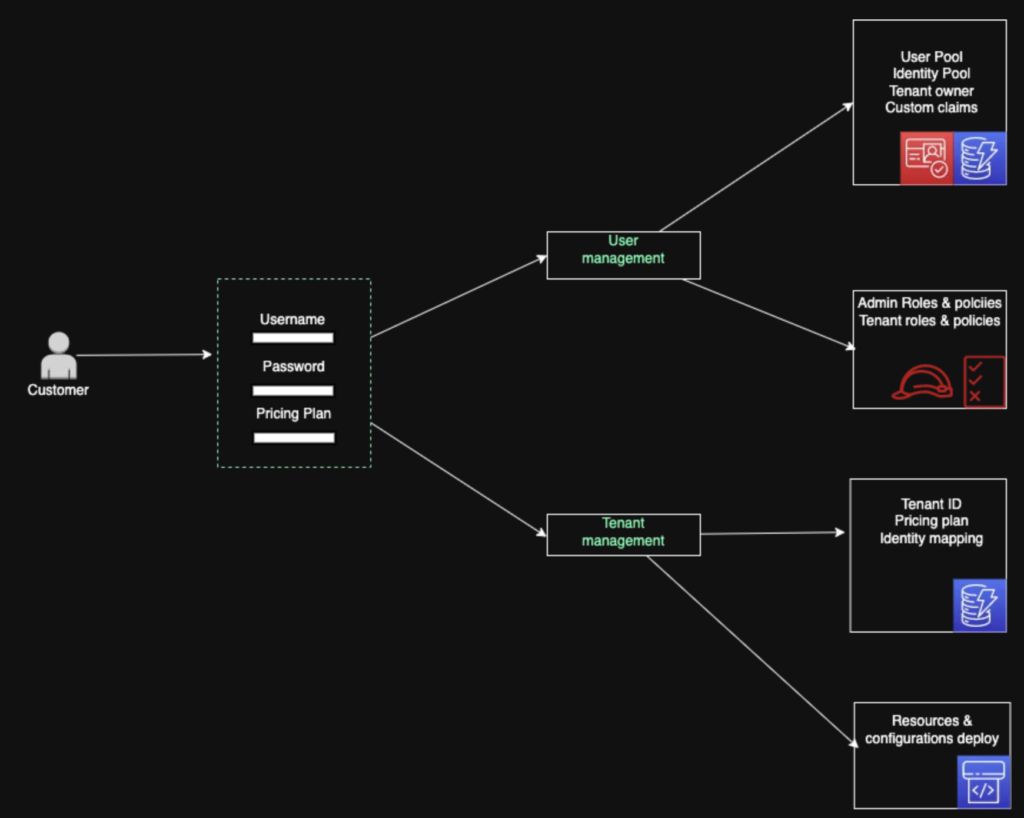

Once a new tenant registration is initiated, several processes are triggered in parallel.

At the top of the diagram is the User Management component, which is responsible for identity and access. It typically relies on three core AWS services: Amazon Cognito, DynamoDB, and IAM. Cognito handles user authentication through dedicated User and Identity Pools, while DynamoDB stores user-related metadata such as the tenant owner, custom claims, and identity mappings. As part of this process, a tenant administrator (owner) is automatically created and associated with the newly provisioned tenant.

On the lower part of the diagram, Tenant Management focuses on tenant-level metadata. This includes the Tenant ID, selected pricing plan, and user-to-tenant mappings. DynamoDB can be shared between user and tenant management components, with data logically separated into different tables to maintain clear ownership boundaries.
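
As a rough illustration of the metadata described above, the following sketch models a tenant record and its owner record the way they might be written to two separate DynamoDB tables. The field names, the plan value, and the `register_tenant` helper are assumptions made for this example; they are not taken from the original implementation.

```python
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class TenantRecord:
    """Item for a hypothetical tenant-management table."""
    tenant_id: str
    name: str
    plan: str
    owner_user_id: str


@dataclass(frozen=True)
class UserRecord:
    """Item for a hypothetical user-management table."""
    user_id: str
    tenant_id: str
    email: str
    role: str


def register_tenant(name: str, owner_email: str, plan: str):
    """Create the tenant and its owner, linked through the tenant ID."""
    tenant_id = uuid.uuid4().hex[:12]
    owner = UserRecord(
        user_id=uuid.uuid4().hex,
        tenant_id=tenant_id,
        email=owner_email,
        role="owner",
    )
    tenant = TenantRecord(
        tenant_id=tenant_id,
        name=name,
        plan=plan,
        owner_user_id=owner.user_id,
    )
    # Plain dicts are returned so they could be passed to a table write.
    return asdict(tenant), asdict(owner)
```

Keeping the two records in separate tables, joined only by `tenant_id`, mirrors the ownership boundary between user management and tenant management.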

So far, this covers identity and metadata — but what about the actual application resources?

Since this architecture is built on Amazon EKS using a namespace-per-tenant isolation model, infrastructure provisioning is handled through an automated pipeline. When a new tenant is onboarded, AWS CodePipeline deploys all required components into a dedicated Kubernetes namespace. This can include application microservices, Kubernetes resources such as Pods, Services, Ingresses, Persistent Volumes, RBAC roles, and Horizontal Pod Autoscalers, as well as additional AWS services depending on the selected pricing plan (for example, DynamoDB tables, S3 buckets, or CloudFront distributions).

This unified deployment path significantly reduces operational complexity compared to silo-based isolation models, where each tenant might require a fully custom infrastructure stack. At the same time, it still allows controlled customization. If a specific customer requires an additional component, it can be provisioned conditionally as part of the onboarding workflow.
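
To give a feel for what a plan-driven, namespace-per-tenant deployment might generate, here is a minimal sketch that builds a Namespace and a ResourceQuota manifest and derives the list of onboarding steps. The plan names, quota values, step names, and the `tenant-<id>` naming scheme are all hypothetical; a real pipeline would render and apply its own templates.

```python
# Hypothetical per-plan quotas and optional AWS add-ons.
PLAN_QUOTAS = {
    "basic": {"requests.cpu": "2", "requests.memory": "4Gi", "pods": "20"},
    "premium": {"requests.cpu": "8", "requests.memory": "16Gi", "pods": "80"},
}
PLAN_EXTRAS = {"basic": [], "premium": ["cloudfront-distribution"]}


def tenant_manifests(tenant_id: str, plan: str) -> list:
    """Build the Namespace and ResourceQuota objects for one tenant."""
    namespace = f"tenant-{tenant_id}"
    ns = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": namespace,
            "labels": {"tenant-id": tenant_id, "plan": plan},
        },
    }
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "tenant-quota", "namespace": namespace},
        "spec": {"hard": dict(PLAN_QUOTAS[plan])},
    }
    return [ns, quota]


def onboarding_steps(plan: str, extras=()) -> list:
    """Common steps first, then plan add-ons, then per-customer extras."""
    steps = ["create-namespace", "apply-resource-quota", "deploy-microservices"]
    steps += PLAN_EXTRAS[plan]
    steps += [extra for extra in extras if extra not in steps]
    return steps
```

Every tenant goes through the same base steps, and customization is expressed as data (`PLAN_EXTRAS`, `extras`) rather than as a separate pipeline per customer.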

To make this more concrete, imagine a multi-tenant SaaS platform for music distribution. Each tenant represents a content owner—such as a record label or independent artist—who manages their own music catalog. The tenant owner has full administrative control and can invite additional users with different permissions, such as content editors or read-only users. While end listeners may consume the content globally, each tenant’s data, configuration, and management capabilities remain isolated. The platform automatically provisions the required infrastructure so that content can be uploaded, managed, and delivered immediately after onboarding.

Under the hood

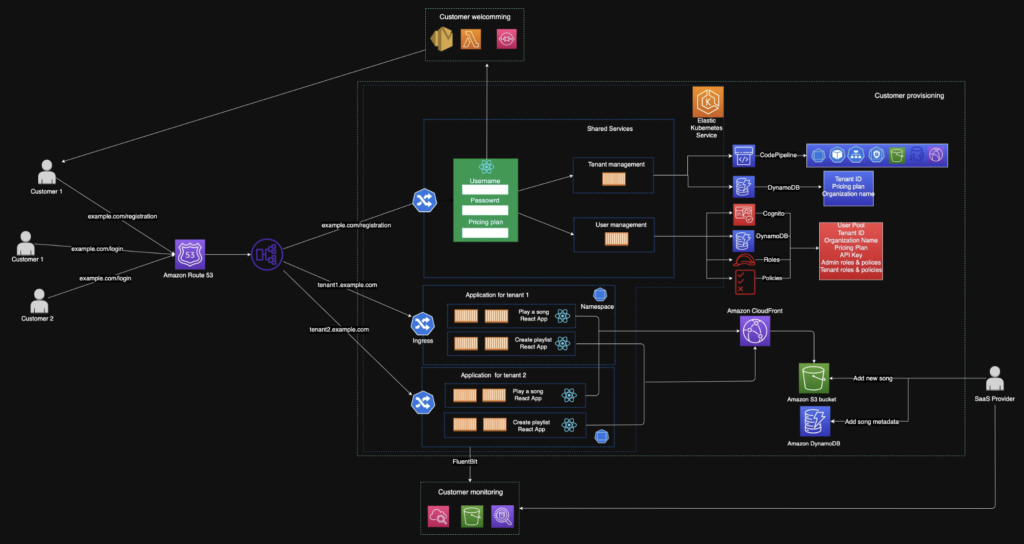

Now let’s take a closer look at what happens under the hood, specifically how traffic is routed, how applications are exposed, and how the platform handles monitoring at scale.

Microservices are deployed on Amazon EKS, but exposing those services securely and efficiently to the public requires several additional components working together. This is where routing, load balancing, and shared infrastructure limits become critical design considerations.

Public Access and Registration Flow

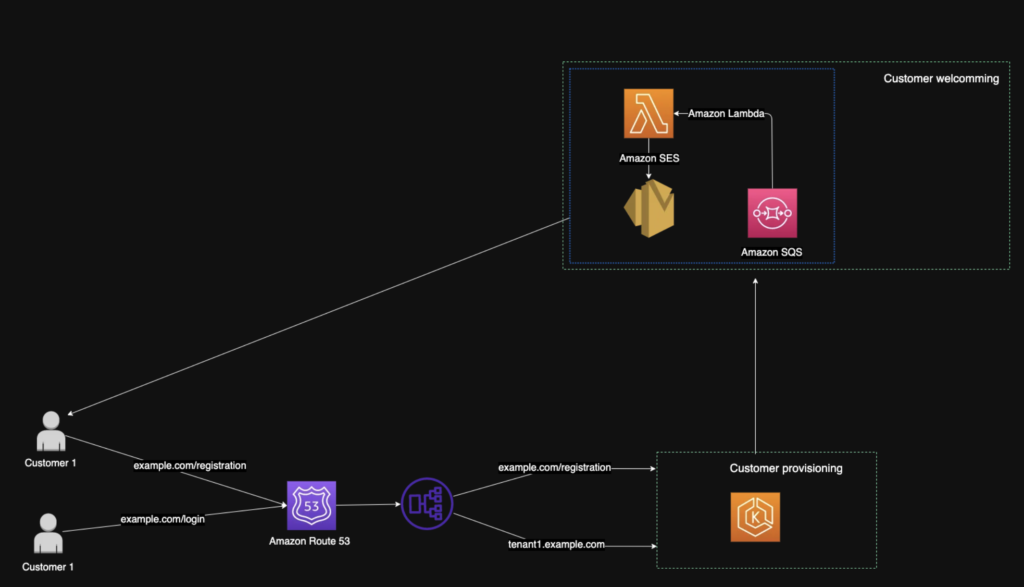

When a user accesses example.com/register, Amazon Route 53 resolves the domain name to an Elastic Load Balancer endpoint. The request is then handled by the load balancer, which routes it to the appropriate backend service running inside the EKS cluster. This public endpoint is used for registration and authentication before a tenant exists.

This design provides a single entry point for all tenants while keeping application logic fully decoupled behind the load balancer. However, when using shared components such as Application Load Balancers, service quotas must be considered early in the design phase. For example, ALBs have limits on target groups and listeners, which can directly influence how many tenants or services can be supported per cluster.
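
The effect of these quotas on capacity planning can be estimated with simple arithmetic. The sketch below assumes limits of 100 rules and 100 target groups per load balancer; treat these numbers as placeholders and check the current Service Quotas for your account. The per-tenant rule and target group counts are also assumptions.

```python
import math


def tenants_per_alb(
    rules_per_tenant: int,
    target_groups_per_tenant: int,
    shared_rules: int = 1,
    shared_target_groups: int = 1,
    max_rules: int = 100,           # assumed quota, verify for your account
    max_target_groups: int = 100,   # assumed quota, verify for your account
) -> int:
    """How many tenants fit behind one ALB before a quota is exhausted."""
    by_rules = (max_rules - shared_rules) // rules_per_tenant
    by_target_groups = (
        max_target_groups - shared_target_groups
    ) // target_groups_per_tenant
    # The tighter of the two limits decides.
    return min(by_rules, by_target_groups)


def albs_needed(tenant_count: int, per_alb: int) -> int:
    """Number of load balancers required for a given tenant count."""
    return math.ceil(tenant_count / per_alb)
```

With two rules and one target group per tenant, `tenants_per_alb(2, 1)` gives 49 tenants per load balancer, so 500 tenants would need 11 load balancers under these assumptions.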

Once a tenant account is successfully created, the platform provisions the tenant environment and the user can access the application through a tenant-specific entry point (for example, a dedicated subdomain). At the same time, a customer welcome workflow is triggered. This part of the system is implemented using serverless components (Amazon SQS, AWS Lambda, and Amazon SES), allowing it to scale independently from the core application. As tenant volume grows, SQS buffers onboarding-related events while Lambda processes them asynchronously, ensuring consistent performance even during traffic spikes.
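
A minimal sketch of the Lambda side of such a workflow is shown below. The message fields (`owner_email`, `tenant_name`, `subdomain`) and the injected `send_email` callable are assumptions for illustration; in a real function, `send_email` would wrap a call to Amazon SES. The return value uses the partial batch response format, which only takes effect if `ReportBatchItemFailures` is enabled on the SQS event source mapping.

```python
import json


def build_welcome_email(message: dict) -> dict:
    """Turn an onboarding event into an email payload (hypothetical fields)."""
    return {
        "to": message["owner_email"],
        "subject": f"Welcome, {message['tenant_name']}",
        "body": (
            "Your workspace is ready at "
            f"https://{message['subdomain']}.example.com"
        ),
    }


def handle_batch(event: dict, send_email) -> dict:
    """Process one SQS batch and report only the messages that failed."""
    failures = []
    for record in event.get("Records", []):
        try:
            message = json.loads(record["body"])
            send_email(build_welcome_email(message))
        except Exception:
            # Only this message is returned to the queue for a retry.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Reporting failures per message means one malformed event does not force the whole batch to be retried, which matters when many tenants sign up at once.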

Tenant Isolation

Note:

At the time this architecture was implemented, Kubernetes Ingress—most commonly backed by Ingress NGINX—was the standard approach for HTTP routing in Kubernetes clusters. Since then, the ecosystem has been evolving toward the Gateway API as a more robust and secure alternative. While the specific routing technology may change, the architectural principles described here remain the same.

Between the load balancer and the application workloads sits the routing layer, responsible for exposing HTTP and HTTPS endpoints and forwarding traffic to services running inside tenant-specific Kubernetes namespaces. Routing decisions can be based on paths or hostnames, but the key goal is to direct requests to the correct tenant context inside the cluster.
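
Hostname-based routing can be illustrated with a small function that maps a request's `Host` header to a tenant namespace. This is only a sketch of the idea: in practice the mapping lives in Ingress or Gateway API rules, and the base domain, reserved labels, and `tenant-<label>` naming used here are assumptions.

```python
import re

BASE_DOMAIN = "example.com"
RESERVED_LABELS = {"www", "api"}
# One DNS label, capped at 56 characters so that "tenant-" + label
# still fits the 63-character limit for Kubernetes namespace names.
_TENANT_LABEL = re.compile(r"^[a-z0-9]([a-z0-9-]{0,54}[a-z0-9])?$")


def namespace_for_host(host: str):
    """Return the tenant namespace for a Host header, or None."""
    hostname = host.split(":")[0].lower().rstrip(".")
    suffix = "." + BASE_DOMAIN
    if not hostname.endswith(suffix):
        return None
    label = hostname[: -len(suffix)]
    if label in RESERVED_LABELS or not _TENANT_LABEL.match(label):
        return None
    return f"tenant-{label}"
```

For example, `namespace_for_host("acme.example.com:443")` resolves to `tenant-acme`, while the bare domain, nested subdomains, and look-alike domains resolve to `None`.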

Each tenant—representing a content owner such as a record label or independent artist—operates within its own Kubernetes namespace. This namespace serves as the primary isolation boundary and contains one or more application components, along with tenant-specific configurations such as resource limits, RBAC rules, and network policies. While the application code is shared across tenants, configuration and enabled capabilities may vary depending on the selected pricing plan.
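
One of the tenant-specific controls mentioned above, a network policy, might look like the following sketch. It builds a NetworkPolicy that admits traffic only from Pods in the same namespace and from the namespace of the ingress controller. The policy name and the `ingress-nginx` namespace are assumptions, and enforcement depends on the cluster's network plugin supporting network policies.

```python
import json


def tenant_network_policy(namespace: str, ingress_namespace: str = "ingress-nginx") -> dict:
    """Allow ingress only from the tenant's own Pods and the routing layer."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "tenant-isolation", "namespace": namespace},
        "spec": {
            # An empty selector applies the policy to every Pod in the namespace.
            "podSelector": {},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [
                        # Pods in the same namespace.
                        {"podSelector": {}},
                        # Pods in the ingress controller's namespace, matched by
                        # the label Kubernetes sets on every namespace.
                        {
                            "namespaceSelector": {
                                "matchLabels": {
                                    "kubernetes.io/metadata.name": ingress_namespace
                                }
                            }
                        },
                    ]
                }
            ],
        },
    }


if __name__ == "__main__":
    # JSON manifests are accepted by kubectl just like YAML ones.
    print(json.dumps(tenant_network_policy("tenant-acme"), indent=2))
```

Because the manifest is generated from the namespace name alone, the same policy can be stamped out for every tenant during onboarding.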

This model strikes a balance between isolation and operational efficiency. Tenants share the underlying cluster and infrastructure, while failures, resource consumption, and access control remain scoped to individual namespaces. This reduces blast radius and allows the platform to scale efficiently as the number of content owners grows.

Logging, Monitoring, and Observability

For observability, application Pods in each tenant namespace can run a Fluent Bit sidecar container that collects their logs. Logs from all namespaces are forwarded into a shared observability pipeline using Amazon CloudWatch, Amazon Kinesis Data Streams, and Amazon Data Firehose (formerly Kinesis Data Firehose), and are ultimately stored in Amazon S3. This approach enables centralized log storage and analysis across all tenants.

While the observability infrastructure is shared, log data is enriched with tenant-specific metadata, allowing logs and metrics to be filtered, queried, and analyzed per tenant. With logs stored in S3, Amazon Athena can be used to investigate incidents, analyze usage patterns, and correlate technical signals with business behavior.
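
The enrichment step can be sketched as follows. Each log record gets tenant metadata attached, and objects are written under Hive-style `key=value` prefixes so that a query engine such as Athena can treat `tenant_id` and `dt` as partitions. The field names, the key layout, and the `tenant_logs` table in the sample query are assumptions for illustration.

```python
from datetime import datetime, timezone


def enrich(record: dict, tenant_id: str, namespace: str) -> dict:
    """Attach tenant metadata without mutating the original record."""
    return {**record, "tenant_id": tenant_id, "namespace": namespace}


def s3_log_key(tenant_id: str, ts: datetime, object_name: str) -> str:
    """Partitioned object key, e.g. logs/tenant_id=acme/dt=2024-01-15/..."""
    return f"logs/tenant_id={tenant_id}/dt={ts:%Y-%m-%d}/{object_name}"


# Example query against a hypothetical table defined over the prefix above.
ERRORS_PER_TENANT_SQL = """
SELECT tenant_id, count(*) AS errors
FROM tenant_logs
WHERE dt = '2024-01-15' AND level = 'ERROR'
GROUP BY tenant_id
ORDER BY errors DESC
"""

if __name__ == "__main__":
    now = datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)
    print(enrich({"level": "ERROR", "msg": "upload failed"}, "acme", "tenant-acme"))
    print(s3_log_key("acme", now, "part-0001.json.gz"))
```

Partitioning by tenant keeps per-tenant queries cheap, since only the matching prefixes need to be scanned.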

This centralized observability model gives SaaS operators a unified view of the entire platform while preserving logical isolation at the data level. It enables early detection of abnormal behavior and supports both operational and business decisions. For example, if one tenant consistently consumes significantly more DynamoDB capacity than others, this becomes immediately visible and actionable.
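
As a simple illustration of how such an outlier could be flagged, the sketch below compares each tenant's consumed capacity against the median across tenants. The threshold factor and the input shape (a mapping from tenant ID to consumed capacity units over some window) are assumptions; a production system would more likely rely on alarms and dashboards than on ad hoc code.

```python
from statistics import median


def noisy_tenants(consumed_by_tenant: dict, factor: float = 3.0) -> list:
    """Return tenants whose consumption exceeds `factor` times the median."""
    if not consumed_by_tenant:
        return []
    baseline = median(consumed_by_tenant.values())
    if baseline <= 0:
        return []
    return sorted(
        tenant
        for tenant, consumed in consumed_by_tenant.items()
        if consumed > factor * baseline
    )
```

For instance, with consumption of `{"a": 100, "b": 120, "c": 90, "d": 900}` the median is 110, so only tenant `d` exceeds the threshold of 330.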

Shared Data and Performance Optimization

In the context of the music distribution example, DynamoDB is used to store metadata such as playlists and song references, while Amazon S3 stores the actual files. These resources are shared across tenants, but data is logically isolated using tenant-specific prefixes and partitioning strategies. Consistent use of prefixes is essential for both scalability and maintainability.
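
A consistent prefix scheme is easiest to maintain when all keys are built in one place. The sketch below shows one possible convention; the `tenants/<id>/...` layout, the `TENANT#` and `PLAYLIST#` key formats, and the attribute names `pk` and `sk` are assumptions, not the original schema.

```python
import re

_SAFE_ID = re.compile(r"^[a-z0-9-]+$")


def _check(identifier: str) -> str:
    """Reject IDs that could escape a prefix (slashes, dots, empty strings)."""
    if not _SAFE_ID.match(identifier):
        raise ValueError(f"unsafe identifier: {identifier!r}")
    return identifier


def s3_object_key(tenant_id: str, track_id: str, filename: str) -> str:
    """Object key for a media file, always under the tenant's prefix."""
    return f"tenants/{_check(tenant_id)}/tracks/{_check(track_id)}/{filename}"


def playlist_item_key(tenant_id: str, playlist_id: str) -> dict:
    """DynamoDB key with the tenant as the leading partition key value."""
    return {
        "pk": f"TENANT#{_check(tenant_id)}",
        "sk": f"PLAYLIST#{_check(playlist_id)}",
    }


def belongs_to_tenant(object_key: str, tenant_id: str) -> bool:
    """Prefix check; the trailing slash keeps 'acme' from matching 'acme2'."""
    return object_key.startswith(f"tenants/{_check(tenant_id)}/")
```

Centralizing key construction also gives a single place to validate identifiers, which prevents one tenant's data from being written under another tenant's prefix by accident.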

As the number of tenants and users grows, performance optimization becomes increasingly important. To reduce latency and improve user experience, frequently accessed content is cached using Amazon CloudFront. This ensures that popular media files are delivered efficiently regardless of user location.

Overall, this architecture provides SaaS providers with deep visibility into application behavior across tenants while enabling rapid iteration. Centralized monitoring, shared infrastructure, and automated provisioning make it possible to release features faster, respond to customer feedback more effectively, and adapt the platform as business requirements evolve.

Core SaaS components

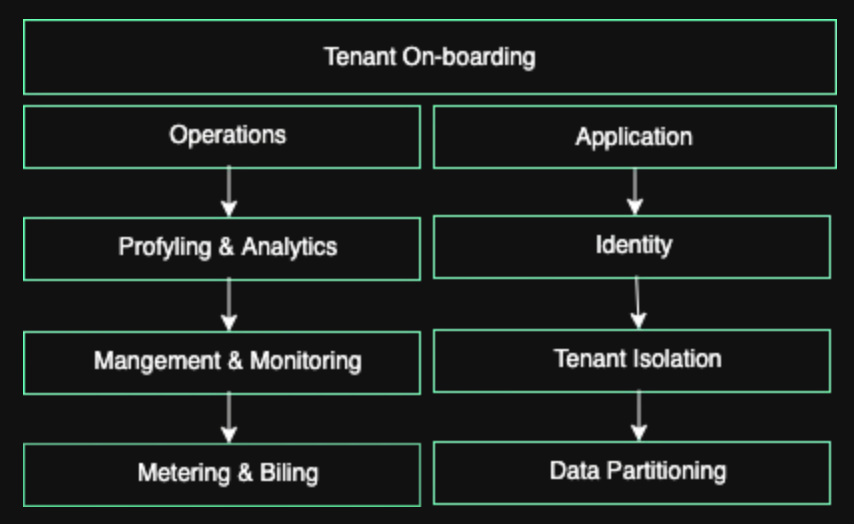

When designing a multi-tenant SaaS platform, it is useful to step back and look at the system through a higher-level lens. Beyond individual AWS services or Kubernetes resources, a SaaS architecture can be broken down into a small set of core components that together define how the platform operates, scales, and evolves.

At a high level, these components can be grouped into tenant onboarding, application, and operations. Each of them plays a distinct role, but they are tightly interconnected and must be designed together rather than in isolation.

Tenant onboarding is the entry point into the system. It is typically implemented as a public-facing microservice responsible for registration, pricing plan selection, and initial provisioning. While the external interface is intentionally simple, the internal workflow is often complex, involving identity setup, metadata creation, and automated infrastructure deployment.

In this architecture, tenant onboarding runs within the same EKS cluster but is logically isolated in its own namespace with dedicated resources. This keeps the onboarding process scalable while maintaining clear separation from tenant workloads.

The application layer focuses on identity, tenant isolation, and data partitioning. Identity is a foundational concern, as every authenticated request must be reliably mapped back to a specific tenant. This mapping directly influences authorization decisions and downstream infrastructure behavior.
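
The mapping from an authenticated request to a tenant can be sketched as reading a tenant claim from the user's token. The example below only decodes the JWT payload and deliberately does NOT verify the signature; a real service must validate the token against the identity provider's published keys before trusting any claim. The claim names `custom:tenant_id` and `custom:role` are assumptions (Cognito prefixes custom attributes with `custom:`, but the attribute names themselves are hypothetical).

```python
import base64
import json


def decode_claims(jwt_token: str) -> dict:
    """Decode the payload segment of a JWT. No signature verification!"""
    parts = jwt_token.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    # Restore the base64 padding that JWTs strip.
    payload += "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(payload))


def tenant_context(claims: dict) -> dict:
    """Build the per-request tenant context from already-verified claims."""
    tenant_id = claims.get("custom:tenant_id")
    if not tenant_id:
        raise PermissionError("token carries no tenant claim")
    return {
        "tenant_id": tenant_id,
        "user_id": claims["sub"],
        "role": claims.get("custom:role", "viewer"),
    }
```

Rejecting tokens without a tenant claim up front means no downstream code ever runs without a tenant context.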

Tenant isolation is primarily achieved using Kubernetes namespaces, complemented by additional controls across AWS services such as IAM, S3, DynamoDB, and Cognito. Data partitioning strategies vary depending on the use case, but a common approach is to use shared storage systems with strict logical separation — for example, tenant-specific prefixes in S3 and partition keys or tables in DynamoDB.
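
On the AWS side, logical separation can be backed by IAM policies scoped to a single tenant. The following sketch generates such a policy document: S3 access is limited to the tenant's prefix, and DynamoDB access is limited to items whose partition key matches the tenant through the `dynamodb:LeadingKeys` condition key. The bucket name, table ARN, action lists, and the `tenants/<id>/` and `TENANT#<id>` conventions are assumptions for illustration.

```python
import json


def tenant_scoped_policy(tenant_id: str, bucket: str, table_arn: str) -> dict:
    """IAM policy document restricting access to one tenant's data."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                # Only objects under this tenant's prefix.
                "Resource": f"arn:aws:s3:::{bucket}/tenants/{tenant_id}/*",
            },
            {
                "Effect": "Allow",
                "Action": ["dynamodb:GetItem", "dynamodb:Query", "dynamodb:PutItem"],
                "Resource": table_arn,
                # Only items whose partition key belongs to this tenant.
                "Condition": {
                    "ForAllValues:StringEquals": {
                        "dynamodb:LeadingKeys": [f"TENANT#{tenant_id}"]
                    }
                },
            },
        ],
    }


if __name__ == "__main__":
    policy = tenant_scoped_policy(
        "acme",
        "media-bucket",
        "arn:aws:dynamodb:us-east-1:123456789012:table/catalog",
    )
    print(json.dumps(policy, indent=2))
```

With a policy like this attached to the credentials a tenant's workload uses, a bug in application-level filtering cannot by itself expose another tenant's objects or items.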

Together, these mechanisms ensure that tenants share infrastructure efficiently while remaining isolated from one another at both the application and data levels.

Operations cover everything required to run the SaaS platform reliably over time. This includes centralized monitoring, logging, profiling, usage analytics, and cost visibility at both the system and tenant levels.

Operational data enables SaaS providers to react to anomalies, optimize performance, and make informed business decisions such as pricing adjustments or feature prioritization. Metering and billing naturally build on top of this layer, bridging technical metrics with business outcomes.
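
To show how metering can bridge technical metrics and billing, here is a small sketch that aggregates usage events per tenant and turns the total into a monthly charge. The event shape, the plan names, and all prices are invented for this example.

```python
from collections import defaultdict

# Hypothetical pricing: base fee, included transfer, and overage rate.
PLAN_PRICING = {
    "basic": {"base": 49.0, "included_gb": 100, "per_extra_gb": 0.10},
    "premium": {"base": 199.0, "included_gb": 1000, "per_extra_gb": 0.05},
}


def aggregate_usage(events) -> dict:
    """Sum transferred gigabytes per tenant from raw usage events."""
    totals = defaultdict(float)
    for event in events:
        totals[event["tenant_id"]] += event["gb_transferred"]
    return dict(totals)


def monthly_charge(plan: str, gb_used: float) -> float:
    """Base fee plus overage for usage beyond the plan's allowance."""
    pricing = PLAN_PRICING[plan]
    extra_gb = max(0.0, gb_used - pricing["included_gb"])
    return round(pricing["base"] + extra_gb * pricing["per_extra_gb"], 2)
```

The same aggregated numbers that feed invoices can also inform pricing decisions, for example by showing how many tenants regularly exceed their plan's allowance.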

By treating operations as a first-class component of the architecture, SaaS providers gain continuous insight into how the platform is used and how it should evolve.

Pros and cons of using EKS for a multi-tenant SaaS system

Amazon EKS offers several advantages for building multi-tenant SaaS platforms, but it also comes with important trade-offs.

Kubernetes namespaces provide a solid foundation for tenant isolation at the compute level, but they are not sufficient on their own. Depending on system complexity, additional isolation and controls are required across IAM, networking, storage, and identity services.

As a managed service, EKS removes the operational burden of running the Kubernetes control plane and provides built-in scalability and high availability. Its tight integration with AWS services such as Elastic Load Balancers simplifies traffic management and service exposure.

The main downside of this model is the shared blast radius. Failures in shared components can impact multiple tenants at once, and the approach works best when tenant infrastructure and feature sets are largely uniform. When tenant requirements vary significantly, silo-based isolation models often provide greater flexibility and risk containment.

In summary, EKS is a strong choice for multi-tenant SaaS platforms that prioritize standardization, scalability, and operational efficiency, as long as its isolation limits are well understood and managed.

Conclusion

In this post, we explored a practical approach to building multi-tenant SaaS applications on Amazon EKS, focusing on tenant onboarding, isolation, and operational concerns. The examples throughout the architecture highlight how business decisions—such as pricing, onboarding experience, and feature standardization—are tightly coupled with technical design choices.

EKS enables SaaS providers to deploy and operate shared infrastructure efficiently while maintaining logical tenant isolation, allowing both new tenants and new features to be delivered quickly. At the same time, this model comes with clear trade-offs around isolation and blast radius that must be carefully managed.

This architecture should be viewed as a reference rather than a one-size-fits-all solution. The core concepts—automation, observability, and alignment between business and technology—are what matter most when designing scalable multi-tenant SaaS platforms.